The points on a residual plot form a pattern when the model function is incorrect. You might be able to transform variables or add polynomial and interaction terms to remove the pattern.

If the points tend to form an increasing, decreasing, or non-constant width band, then the variance is not constant. You should consider transforming the response variable or incorporating weights into the model. When variance increases as a percentage of the response, you can use a log transform, although you should ensure it does not produce a poorly fitting model. Even with non-constant variance, the parameter estimates remain unbiased, if somewhat inefficient. However, the hypothesis tests and confidence intervals are inaccurate.

Examine the normal plot of the residuals to identify non-normality.

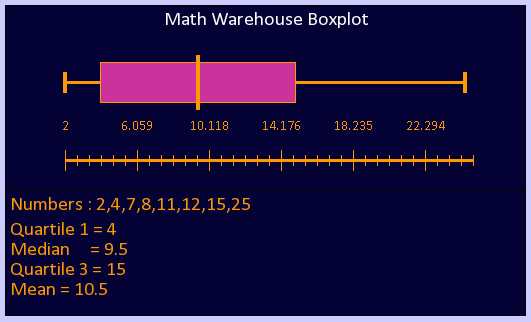

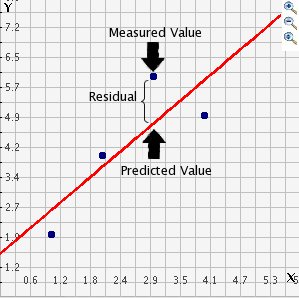

A brief overview of linear regression assumptions and the key visual tests

The assumptions

The four key assumptions that need to be tested for a linear regression model are:

- Linearity: The expected value of the dependent variable is a linear function of each independent variable, holding the others fixed. Note that this does not prevent you from using nonlinear transformations of the independent variables: you can still model f(x) = ax² + bx + c, using both x² and x as predicting variables.
- Independence: The errors (residuals of the fitted model) are independent of each other.
- Homoscedasticity (constant variance): The variance of the errors is constant with respect to the predicting variables or the response.
- Normality: The errors are generated from a Normal distribution (of unknown mean and variance, which can be estimated from the data). Note that, unlike the first three assumptions, this is not a necessary condition for performing linear regression. However, without this assumption being satisfied, you cannot easily calculate the so-called 'confidence' or 'prediction' intervals, as the well-known analytical expressions corresponding to the Gaussian distribution cannot be used.

For multiple linear regression, judging multicollinearity is also critical from the statistical inference point of view. This assumption requires minimal or no linear dependence between the predicting variables.

Outliers can also be an issue, impacting the model quality by having a disproportionate influence on the estimated model parameters.

But there is a piece of bad news. We can never know the true errors, no matter how much data we have. We can only estimate and draw inference about the distribution from which the data is generated. Therefore, the proxy of true errors is the residuals, which are just the difference between the observed values and the fitted values.

Bottom line: we need to plot the residuals and check their random nature, variance, and distribution to evaluate the model quality. This is the visual analytics needed for the goodness-of-fit estimation of a linear model.

Apart from this, multicollinearity can be checked from the correlation matrix and heatmap, and outliers in the data (residual) can be checked by so-called Cook's distance plots.

Example of regression model quality evaluation

The entire code repo for this example can be found in the author's Github. We are using the concrete compressive strength prediction problem from the UCI ML portal. The concrete compressive strength is a highly complex function of age and ingredients. Can we predict the strength from the measurement values of these parameters?

Scatterplot of variables to check for linearity

We can simply check the scatterplot for visual inspection of the assumption of linearity. It is also important to check the fit of the model and the other assumptions (constant variance, normality, and independence of the errors) using the residual plot, along with normal, sequence, and lag plots.
